The Dark Side

With every technological advancement, criminals find a way to advance their crimes with it.

Technological advancements promise to make our lives better, and they make us want something today that we did not know we needed yesterday. The internet promised more efficient work and email that would replace snail mail. It also led to phishing and online scams, which were just new ways to commit old-fashioned robbery, theft or deception. Even the invention of trains, which revolutionized transportation,1 led to the great train robbery.

These are dual-use technologies, and the federal government requires a special review before any technology on its control list is exported. Artificial intelligence can be exported if it falls within exceptions that turn on factors such as whether it has already been published and the extent to which it has been “trained.”2

With the introduction of artificial intelligence to the global community, the dark side of humanity is busy adopting it to better commit old crimes and to invent new ones. An online financial security consulting firm, TRM, described this evolution as follows:

At first, these organizations turned to AI to aid their human-led criminal efforts, translating phishing scripts into multiple languages or scanning vast codebases for exploitable vulnerabilities. These early applications mirror legitimate uses of AI, but are weaponized to devastating effect.

The next frontier for criminals is autonomy. Just as corporations strive to automate tasks, cybercriminals can develop AI agents capable of operating completely independently. These agents could identify and exploit vulnerabilities without human oversight — executing complex objectives, such as hacking critical infrastructure (e.g. water treatment plants).

This evolution doesn’t just change the scale of criminal operations, it fundamentally reshapes them — making them faster, more efficient, and alarmingly difficult to detect or counteract.3

I am going to narrow this examination to cybercrimes, since artificial intelligence lives on the web, which is also its origin.

1. AI-Powered Ransomware

Previously, creating ransomware required some coding talent and 3-5 years of training. With “vibe” coding, a Claude.ai account holder used the tool “to develop, market, and distribute several variants of ransomware, each with advanced evasion capabilities, encryption, and anti-recovery mechanisms.” The ransomware collected personal data and threatened to make it public on the internet, rather than simply encrypting the data. Ransom demands ran as high as half a million dollars. The account holder sold these ransomware packages on internet forums for $400 to $1,200 each.4 This represents a giant leap: sophisticated ransomware can now be created by just about anyone.

2. Deepfakes and Financial Fraud

A company employee was deceived by a deepfake video call in which the caller appeared to be the company’s CFO. The employee transferred $25 million to the perpetrators.5 In another case, a Hong Kong firm also lost $25 million to a deepfake scam impersonating the company’s Chief Financial Officer after being duped into attending a very realistic video call where the CFO was present along with other employees, all of them deepfakes.6 The consulting company Tech Advisors considers this a major threat, having found that only 71% of people globally know what a deepfake is and only 0.1% can identify one.7

3. AI-Generated Phishing Campaigns

By April 2025, at least 14% of focused email attacks were generated using large language models (LLMs), up from 7.6% in April 2024. Additionally, 82.6% of phishing emails now use AI technology in some form, and generative AI tools help hackers compose phishing emails up to 40% faster.8

4. Post-Exploitation Malware

Post-exploitation strategy has shifted toward using AI tools to generate the commands that continue the attack on the victim’s computer. In one example from August 2025, a JavaScript upload was used to alter an AI command-line interface (CLI) tool installed locally on the victim’s computer, such as Gemini or Grok, and direct it to steal authentication codes and cryptocurrency assets.9

Prosecuting Crimes Committed Using Artificial Intelligence

Crimes aided by technological advances are of two types: (1) crimes that still meet the definition of a statutory or common law crime but are enhanced, made faster or more frequent; or (2) entirely new crimes that use the technology, do not squarely fit the definition of any existing crime, and may require new legislation to address the threat to society.

Legislators first have to understand these crimes, see the need, and make legislation addressing them a priority. Most prosecutions so far based on examples 1-3 above have charged perpetrators under existing fraud, computer crime, and extortion laws rather than under any AI-specific statutes. Example 4 might be charged as “unauthorized access to a computer,” punishable by 1-10 years in prison and up to 20 years depending on the extent of the damage caused. Specific AI cybercrime legislation is going to be needed.

In February 2024, the Deputy Attorney General, Lisa Monaco, announced a new initiative, Justice AI. The new initiative would have DOJ prosecutors asking for stricter sentences for perpetrators who use artificial intelligence in the commission of their crimes.10

Internationally, at a 2024 conference with twelve technology companies, the International Criminal Court discussed AI’s impact on crimes such as foreign influence operations, digital surveillance, and attacks on civilian infrastructure. A stronger international legal framework built on a global model statute might be one solution.11

Final thoughts

We are in the early stages of understanding the types of cybercrimes that will grow from artificial intelligence. We are well into seeing what is possible with enhanced scale, speed and efficiency in traditional cybercrimes, but not far enough along to know what new crimes will emerge. Perhaps by the end of 2026 we will have a better picture of the range of what is possible.

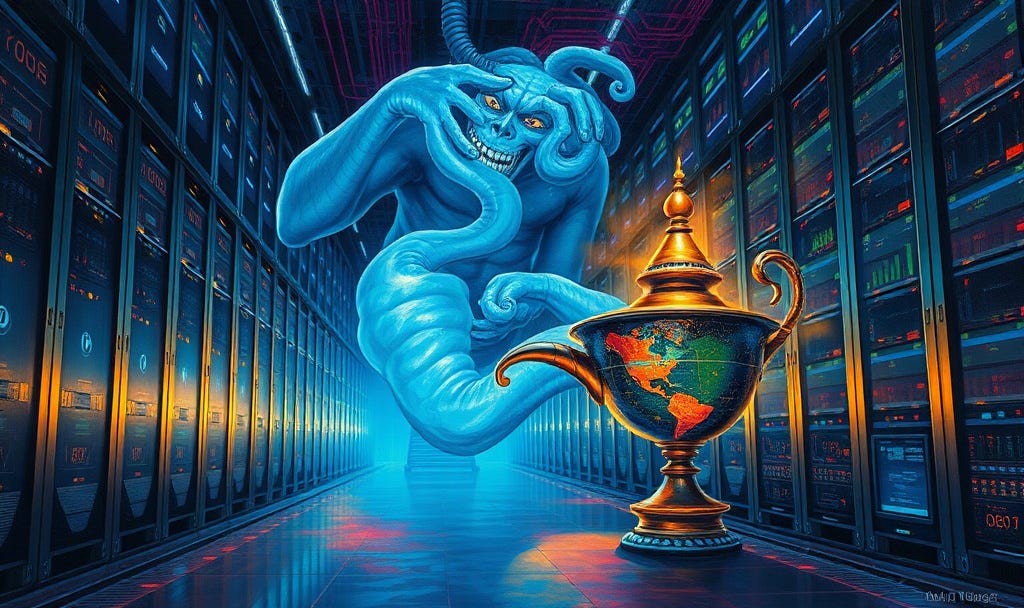

When we let the genie out of the bottle with the release of ChatGPT, we also let the evil genie out. Like all genies, both are impossible to put back into the bottle.

1. https://www.aar.org/chronology-of-americas-freight-railroads

2. § 734.7 of the EAR, 4E091 at https://www.bis.gov/regulations/ear/774#supplement-1-774

3. https://www.trmlabs.com/resources/blog/the-rise-of-ai-enabled-crime-exploring-the-evolution-risks-and-responses-to-ai-powered-criminal-enterprises

4. https://www.anthropic.com/news/detecting-countering-misuse-aug-2025

5. https://www.technologyreview.com/2026/02/12/1132386/ai-already-making-online-swindles-easier/

6. https://www.cnn.com/2024/05/16/tech/arup-deepfake-scam-loss-hong-kong-intl-hnk

7. https://tech-adv.com/blog/ai-cyber-attack-statistics/

8. https://tech-adv.com/blog/ai-cyber-attack-statistics/

9. https://www.crowdstrike.com/explore/2026-global-threat-report

10. https://investigations.cooley.com/2024/03/05/doj-warns-of-harsher-punishment-for-crimes-committed-using-artificial-intelligence/

11. Blake Morrow, “Not a Victimless Crime”: A Comparison of Global Regulatory Frameworks and the Future of the International Community’s Response to Artificial Intelligence Crime, 21 Loy. U. Chi. Int’l L. Rev. 119 (). Available at: https://lawecommons.luc.edu/lucilr/vol21/iss2/4